AI literacy for kids: what a free course and a heated debate taught me

- Anthropic's free AI Fluency for Students course teaches a solid 4D framework (Delegation, Description, Discernment, Diligence) but is pitched at 16+, not primary school kids.

- When I shared the course on LinkedIn, teachers and parents split into five distinct camps, from "keep kids away from AI entirely" to "just signed up."

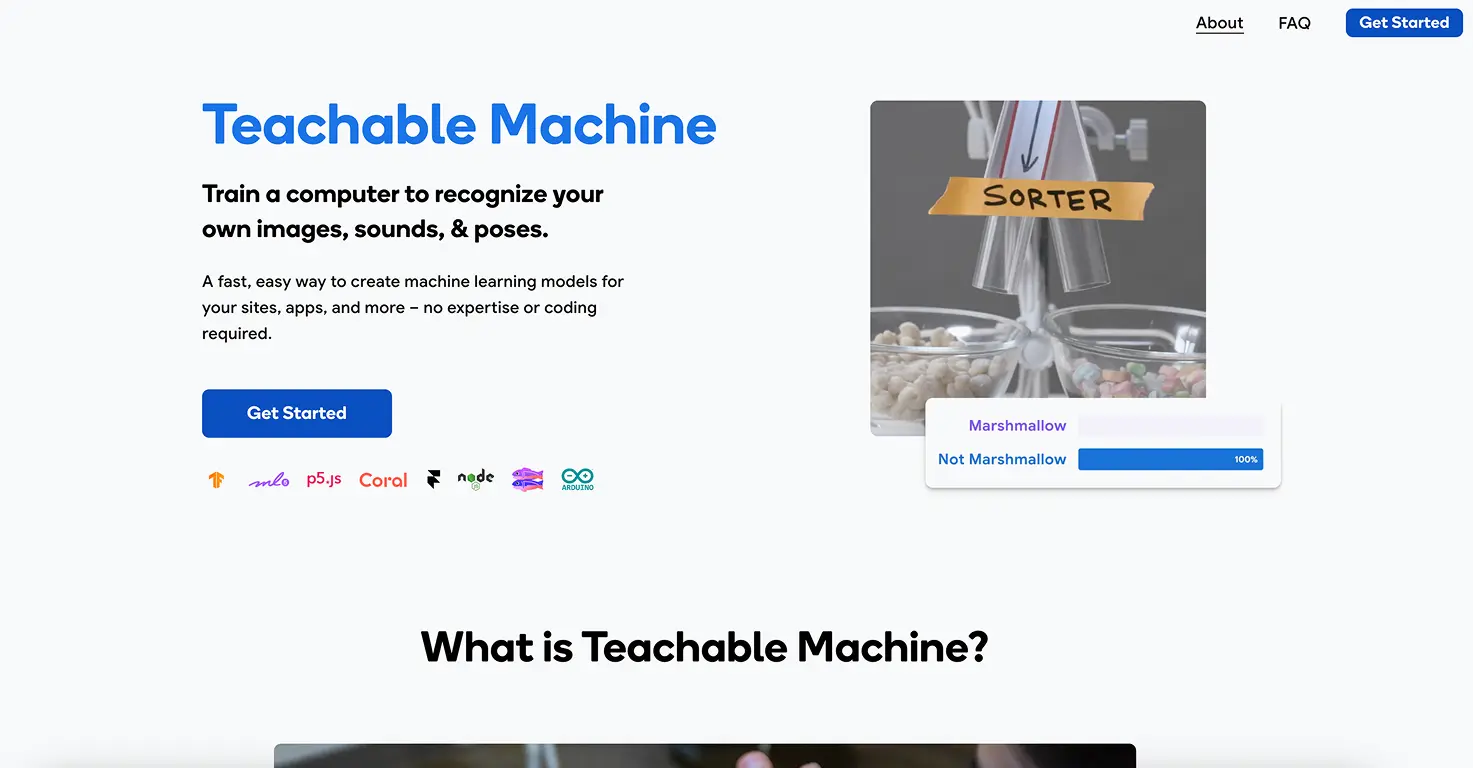

- AI literacy (understanding how AI works) matters more than AI usage (knowing how to prompt it), and hands-on tools like Teachable Machine and Quick, Draw! teach literacy better than lectures.

- The real question is not whether kids should learn AI, but whether the available courses actually meet them where they are.

Last week I shared Anthropic's new "AI Fluency for Students" course on LinkedIn. It's free, it takes 30 minutes, and it teaches a framework for using AI responsibly. I thought parents and teachers would find it useful.

The post got a lot of traction, but the comments were more interesting than the course itself. Teachers, parents, and AI researchers split into completely different camps, each with a strong opinion about whether kids should be learning AI at all.

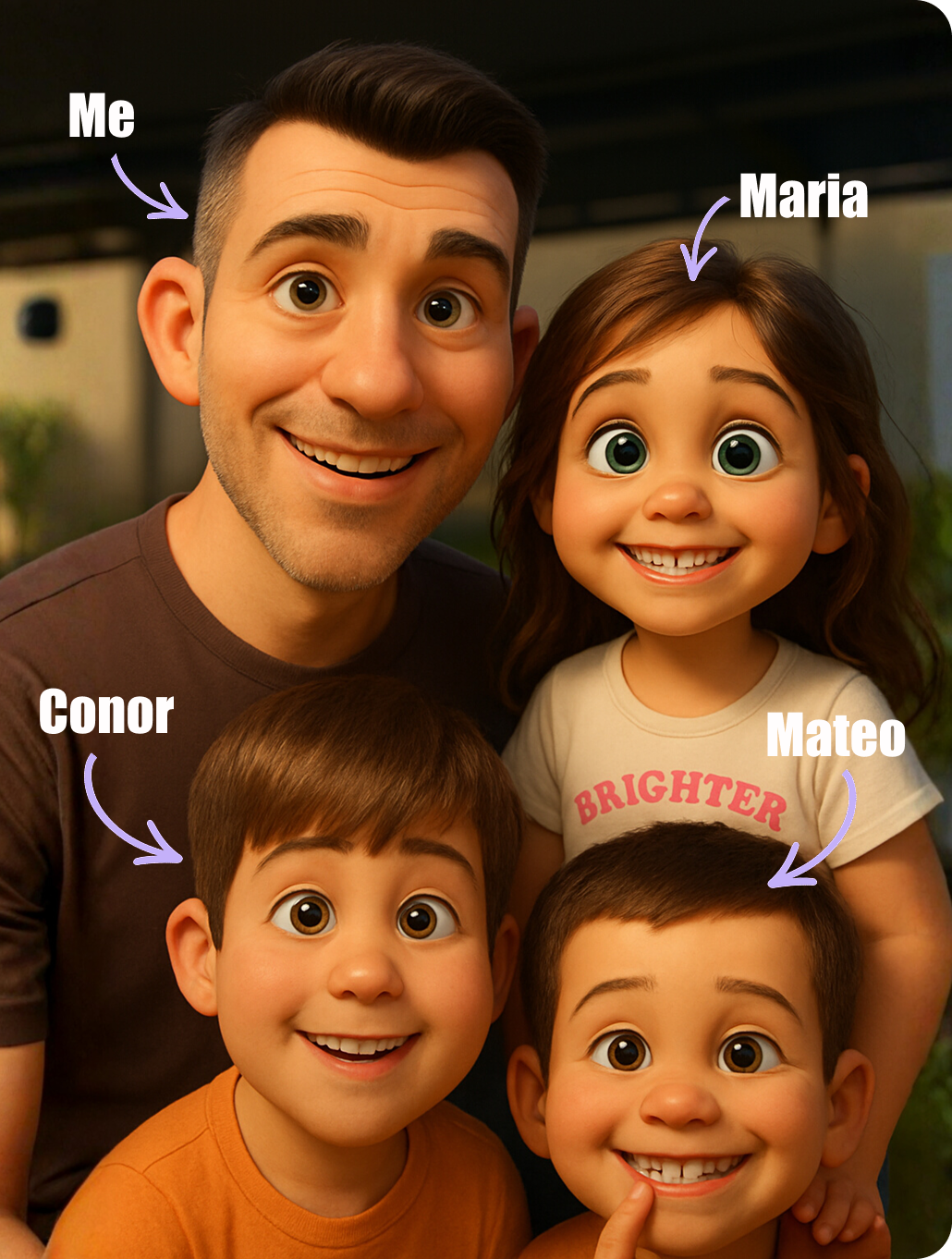

Then I sat down to do the course with my 8-year-old. Within two minutes, I had my own strong opinion. Here's what happened.

What the course actually teaches

Anthropic's AI Fluency for Students is a free, self-paced course with five video lectures. The core idea is something called the 4D model:

- Delegation: decide what AI should handle and what you should do yourself

- Description: learn to give AI clear, specific instructions

- Discernment: check AI's work and spot mistakes

- Diligence: be honest about when and how you used AI

The framework itself is genuinely good. These four principles are exactly what every kid needs to learn before using AI tools independently. The problem is not what the course teaches. It's who it's actually designed for.

I tried it with my 8-year-old. It didn't work.

I sat down with Mateo expecting a 30-minute session we could do together. Within two minutes it was clear this course is not for primary school kids.

Two of the five lectures are about career planning, job searching, and writing cover letters. The examples reference university assignments and professional workflows. The pacing assumes you can sit through a lecture-style video and absorb abstract concepts about "deployment diligence."

Mateo lost interest before the first video was halfway done. And honestly, I don't blame him. An 8-year-old does not need to know how to use AI to write a cover letter. He needs to know what AI is, how it learns, and why it sometimes gets things wrong.

If you have a secondary school or university-age student, the course is worth the 30 minutes. For primary school kids, it misses the mark completely.

💡 Parent Insight: The 4D framework (Delegation, Description, Discernment, Diligence) is solid. But the delivery is pitched at 16+ at the youngest. If your kid is under 12, skip the course and teach the same ideas through hands-on tools instead.

The debate: teachers and parents split into five camps

The LinkedIn post generated dozens of comments from teachers, parents, lecturers, and AI professionals. The responses clustered into five clear perspectives.

Camp 1: "Keep kids away from AI entirely"

Some commenters believe kids should spend their formative years building creativity, originality, and critical thinking without AI in the picture at all. The argument: if you introduce AI tools before kids have developed their own thinking skills, you're building on a weak foundation.

There's something to this. My 3-year-old does not need ChatGPT. But I think the line between "too early" and "too late" is different for every kid, and blanket bans ignore the fact that AI is already in their world whether we introduce it or not.

Camp 2: "These courses are corporate cover"

A PhD lecturer made a sharp point: Anthropic is an AI company offering a course about how to use AI responsibly. That's a bit like a cigarette company funding a "smoke responsibly" campaign. The argument is that these courses shift responsibility from the company onto the user, acting more like a legal disclaimer than genuine education.

I think this is partly fair. The course does feel like it's designed to say "we tried to teach people" rather than to actually change how young people interact with AI. But the underlying ideas (check AI's work, be honest about using it) are still worth teaching, regardless of who packaged them.

Camp 3: "Teach how it works, not just how to use it"

One commenter made the distinction between Black Box AI and Glass Box AI. Black Box AI means treating the tool as a magic button: you type something in, something comes out, you don't know why. Glass Box AI means understanding what's happening inside, at least at a basic level.

This is the comment that stuck with me most. If kids learn to prompt ChatGPT without understanding what a large language model does, that's not literacy. That's button-pushing. Real AI literacy means understanding enough about how these tools work to know when to trust them and when not to.

This is exactly what tools like Teachable Machine and Quick, Draw! do well. When my 8-year-old trained Teachable Machine to recognise his toys, he wasn't just using AI. He was seeing how it learns. When something was misclassified, he figured out why (bad lighting, similar colours) and fixed it. That's Glass Box thinking, and he picked it up in 10 minutes without a single lecture.

Camp 4: "Kids are lazy anyway, this helps"

The pragmatic take: kids are already using AI to shortcut their homework. At least a course like this teaches them to do it honestly and think about what they're delegating. Meet them where they are instead of pretending AI doesn't exist.

I've seen this firsthand. Mateo once asked ChatGPT to just do his homework for him. We had a conversation about it. The 4D framework would have been useful in that moment, if it had been taught in a way an 8-year-old could understand.

Camp 5: "Just signed up!"

A literacy specialist saw the post and enrolled on the spot. Several teachers shared it with their departments. For educators working with older students, the course fills a genuine gap.

AI literacy vs AI usage: the real distinction

The debate in those comments circles around a question most parents haven't thought about yet: is there a difference between AI literacy and AI usage?

AI usage is knowing how to prompt ChatGPT, generate images with Gemini, or make a song with Suno. It's practical, it's fun, and it's what most "AI for kids" content focuses on.

AI literacy is understanding what AI is, how it learns, why it makes mistakes, and what it cannot do. It's harder to teach, less flashy, and much more important in the long run.

Most AI courses pitched at young people, including Anthropic's, lean heavily toward usage. "Here's how to write a good prompt." "Here's how to check AI's work." That's useful, but it skips the foundation.

The tools that teach literacy best are the ones where kids can see the process, not just the output:

- Teachable Machine: kids train their own AI model and watch it learn in real time. When it gets something wrong, they can figure out why. That's literacy.

- Quick, Draw!: kids draw and watch an AI try to recognise their sketches. They see it struggle with ambiguous drawings and learn that AI "sees" differently than humans. That's literacy.

- Semantris: kids play word association games with an AI that ranks words by how closely related it thinks they are, and start to understand how language models process meaning. That's literacy.

Compare that to sitting through a lecture about "deployment diligence." One approach builds understanding. The other teaches compliance.

💡 Parent Insight: If your kid can prompt ChatGPT but can't explain what a large language model does at a basic level, they have usage skills but not literacy. Both matter, but literacy is what helps them make good decisions about AI for the rest of their lives.

What actually works for primary school kids

After 500+ hours of testing AI tools with my three kids, here's what I've found teaches AI literacy best for the under-12 age group:

Let them train something. Teachable Machine is free, takes 10 minutes, and gives kids a mental model for how AI learns. My 8-year-old went from "what's AI?" to "I just taught a computer" in one session. No lectures required.

Let them see AI fail. Quick, Draw! is brilliant for this. The AI tries to guess what your kid is drawing, and it gets things hilariously wrong. That single experience teaches more about AI limitations than any course module.

Let them compare AI to themselves. When my 6-year-old makes a song with Suno, we talk about what she chose (the topic, the mood, the words) versus what the AI did (the melody, the instruments, the voice). That conversation is AI literacy happening naturally.

Skip the abstract frameworks until they're ready. The 4D model is solid for teenagers. For primary school kids, the same ideas come through in hands-on play. "Did the AI get it right?" is Discernment. "What did you tell it to do?" is Description. You don't need the vocabulary to teach the concept.

The free courses, if you want them

Despite my criticisms, the Anthropic courses are free and the framework is sound. Here's who they're actually for:

AI Fluency for Students (free, 30 minutes): best for ages 16+, university students, or young professionals. Covers the 4D framework with examples from academic and professional contexts.

AI Fluency for Educators (free): designed for teachers who want to integrate AI literacy into their classroom. Includes lesson planning angles and discussion frameworks.

For primary school kids, skip the courses and try these instead:

- Teachable Machine: train your own AI model (free, 10 min, ages 5+)

- Quick, Draw!: draw and watch AI guess (free, 5 min, ages 4+)

- Restore Old Family Photos with Nano Banana 2: use AI image generation with a real family purpose (free, 15 min, all ages)

The bottom line

The debate under my LinkedIn post revealed something important: we don't yet agree on what AI literacy for kids should look like. Some think kids should avoid AI entirely. Some think any exposure is better than none. Some think the courses themselves are corporate PR.

After testing the Anthropic course with my 8-year-old and watching it fail to hold his attention for two minutes, I land somewhere specific: the ideas behind the course are right, but the delivery is wrong for young kids. AI literacy for primary school children needs to be hands-on, visual, and fun. It needs to happen through building and playing, not through lectures about cover letters and career planning.

The good news? The tools to do this already exist, they're free, and your kids will actually enjoy using them.
